Running Flaresolverr on AWS Lambda: A Serverless Approach

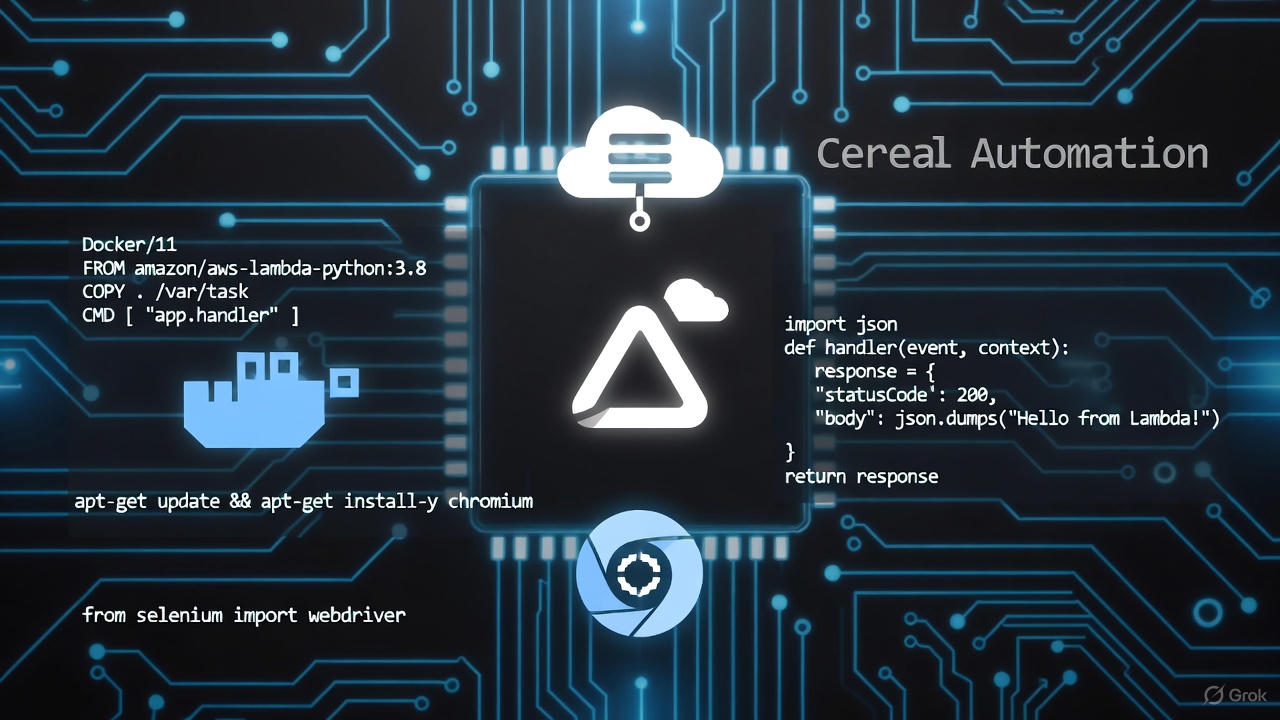

TL;DR: Running Flaresolverr as a persistent Docker container costs money 24/7. Moving it to AWS Lambda (ARM64, 3008MB, 45s timeout) turns it into a pay-per-use service that scales automatically and costs nothing when idle. Key changes: switch from Bottle to FastAPI + Mangum, containerize with Docker, and pass the right Chromium flags for the Lambda environment.

Flaresolverr is a proxy server for bypassing Cloudflare bot protection, widely used in automation and web scraping. The standard setup runs it as a long-lived Docker container — but that means paying for compute 24/7, even when you’re only using it occasionally. If you’d rather skip the infrastructure entirely and use pre-built automation scripts, Cereal handles Cloudflare bypass internally for supported scripts.

This post covers how to run Flaresolverr on AWS Lambda, turning it into a serverless, on-demand Cloudflare bypass that scales automatically and costs nothing when idle.

The Challenge: Running a Browser Inside Lambda

Getting a full browser like Chromium running in AWS Lambda isn’t straightforward. The environment imposes several constraints:

- Memory and CPU: Browsers are resource-hungry, and Lambda has limits.

- Filesystem: Lambda has a read-only filesystem except for /tmp.

- Execution model: Lambda functions are ephemeral; they don’t maintain state between invocations the way a long-running daemon does.

- Cold starts: Launching a browser from scratch adds latency on the first invocation.

Each of these requires a specific fix.
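The filesystem constraint, for example, usually comes down to pointing every path the browser writes to at /tmp. Below is a minimal sketch of that idea. The function names and directory layout are hypothetical and not taken from the Flaresolverr code; `--user-data-dir` and `--disk-cache-dir` are standard Chromium switches.

```python
import os
import tempfile


def prepare_writable_dirs(base="/tmp"):
    """Create a fresh browser profile directory under a writable base path.

    The layout (profile dir plus a nested cache dir) is an assumption
    made for illustration.
    """
    profile_dir = tempfile.mkdtemp(prefix="chromium-profile-", dir=base)
    cache_dir = os.path.join(profile_dir, "cache")
    os.makedirs(cache_dir, exist_ok=True)
    return {"user_data_dir": profile_dir, "cache_dir": cache_dir}


def as_chromium_args(paths):
    """Turn the prepared paths into Chromium command-line switches."""
    return [
        f"--user-data-dir={paths['user_data_dir']}",
        f"--disk-cache-dir={paths['cache_dir']}",
    ]
```

Creating a new profile directory per invocation also sidesteps stale state left behind by a previous request in a reused execution environment, at the cost of a slightly slower browser start.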

The Solution: Key Changes to Flaresolverr

1. Framework Switch: Bottle to FastAPI and Mangum

The original Flaresolverr used the Bottle framework. To make it compatible with Lambda’s event-driven model, we switched to FastAPI with Mangum as the ASGI adapter.

- FastAPI provides a high-performance web framework that handles standard HTTP locally.

- Mangum wraps the ASGI app so Lambda events are processed correctly in production.

This lets the same application run locally over HTTP and in Lambda without code changes.
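With Mangum, the wiring is typically a single line, `handler = Mangum(app)`, where `app` is the FastAPI instance. To show what that adapter does conceptually, here is a stdlib-only sketch that translates an API Gateway HTTP API (payload v2) event into an ASGI call and back. It is not Mangum's implementation, and the tiny `app` below is a hypothetical stand-in for the real FastAPI application.

```python
import asyncio
import base64
import json


async def app(scope, receive, send):
    """Tiny ASGI app standing in for the FastAPI application (hypothetical)."""
    message = await receive()
    payload = json.loads(message.get("body") or b"{}")
    body = json.dumps({"status": "ok", "cmd": payload.get("cmd")}).encode()
    await send({
        "type": "http.response.start",
        "status": 200,
        "headers": [(b"content-type", b"application/json")],
    })
    await send({"type": "http.response.body", "body": body})


def handler(event, context):
    """Convert a Lambda HTTP event to an ASGI scope, run the app, return a dict."""
    raw = event.get("body") or ""
    body = base64.b64decode(raw) if event.get("isBase64Encoded") else raw.encode()
    scope = {
        "type": "http",
        "method": event["requestContext"]["http"]["method"],
        "path": event["rawPath"],
        "query_string": event.get("rawQueryString", "").encode(),
        "headers": [
            (k.lower().encode(), v.encode())
            for k, v in (event.get("headers") or {}).items()
        ],
    }
    response = {}

    async def receive():
        return {"type": "http.request", "body": body, "more_body": False}

    async def send(message):
        if message["type"] == "http.response.start":
            response["statusCode"] = message["status"]
            response["headers"] = {
                k.decode(): v.decode() for k, v in message["headers"]
            }
        elif message["type"] == "http.response.body":
            chunk = message.get("body", b"").decode()
            response["body"] = response.get("body", "") + chunk

    asyncio.run(app(scope, receive, send))
    return response
```

Because the application only ever sees the ASGI interface, a local ASGI server can drive the same `app` object over plain HTTP while Lambda drives it through `handler`.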

2. Containerization with Docker

We moved to a container-based Lambda function to package all dependencies — including the browser and the AWS Lambda Runtime Interface Client (RIC) — into a single image.

Key Dockerfile changes:

- Base image: python:3.11-slim-bookworm

- Added awslambdaric for Lambda Runtime API integration

- Included system dependencies required by Chromium

3. Browser Flags for the Lambda Environment

The most important part is configuring Chromium (via nodriver) to survive in Lambda. The required flags:

- --headless=new: no display available in Lambda

- --no-sandbox: Lambda runs as a non-root user with limited privileges

- --disable-gpu: no GPU in Lambda

- --disable-dev-shm-usage: /dev/shm (shared memory) is restricted in Lambda

- --no-zygote: disables the zygote process to save memory and reduce startup time

- --disable-setuid-sandbox: additional sandbox disabling required in the Lambda environment
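One way to keep these flags in a single place is a small helper that builds the argument list. This is a sketch; the constant and function names are hypothetical, and how the list reaches the browser depends on your nodriver version (passing it as a browser-arguments list at startup is an assumption here, not something the original code confirms).

```python
# Flags listed above for running Chromium inside Lambda.
LAMBDA_CHROMIUM_FLAGS = [
    "--headless=new",
    "--no-sandbox",
    "--disable-gpu",
    "--disable-dev-shm-usage",
    "--no-zygote",
    "--disable-setuid-sandbox",
]


def build_browser_args(extra=None):
    """Return the Lambda flag list plus any extra flags, without duplicates."""
    args = list(LAMBDA_CHROMIUM_FLAGS)
    for flag in extra or []:
        if flag not in args:
            args.append(flag)
    return args


# Example: add a per-invocation profile directory under /tmp.
browser_args = build_browser_args(["--user-data-dir=/tmp/chromium-profile"])
```

Keeping the list as data rather than scattering flags through the startup code makes it easy to diff against a local, non-Lambda configuration.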

4. Serverless Framework Configuration

We used the Serverless Framework for deployment with these settings:

- 3008 MB memory: AWS Lambda allocates CPU in proportion to memory. A function reaches the equivalent of one full vCPU at 1,769 MB, so 3008 MB gives it more than one vCPU of compute, which meaningfully speeds up browser startup and page loads.

- ARM64 (Graviton2): Cheaper than x86 and often faster for this type of workload.

- 45-second timeout: Enough headroom for browser startup and challenge solving.
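A fixed timeout is easier to live with if the handler stops work slightly before Lambda kills it, so the caller gets a proper error response instead of a dropped invocation. The sketch below uses the Lambda context method `get_remaining_time_in_millis()`; the 3-second safety margin, the function names, and the error payload shape are assumptions for illustration.

```python
import asyncio

SAFETY_MARGIN_MS = 3_000  # assumed headroom for cleanup and response encoding


def time_budget_seconds(context, margin_ms=SAFETY_MARGIN_MS):
    """Seconds left for browser work before the Lambda timeout, minus a margin."""
    remaining_ms = context.get_remaining_time_in_millis()
    return max(0.0, (remaining_ms - margin_ms) / 1000.0)


async def solve_with_deadline(solve, context):
    """Run the async `solve` callable, giving up when the time budget runs out."""
    budget = time_budget_seconds(context)
    try:
        return await asyncio.wait_for(solve(), timeout=budget)
    except asyncio.TimeoutError:
        return {"status": "error", "message": f"timed out after {budget:.0f}s"}
```

With a 45-second function timeout and this margin, the challenge solver gets at most about 42 seconds on a warm start, and less when a cold start has already consumed part of the budget.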

Advantages Over a Long-Running Container

Automatic scaling. Lambda spins up a separate execution environment for each concurrent request. A burst of 1000 requests can run as up to 1000 parallel invocations, subject to your account’s concurrency quota, with no queuing behind a single container.

Pay per use. You’re billed for the milliseconds your code runs. For sporadic scraping, this is significantly cheaper than a 24/7 VPS or EC2 instance.
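The billing model is simple enough to estimate by hand: compute cost is invocations times duration times memory in GB, multiplied by a per-GB-second rate, plus a small per-request fee. The helper below sketches that arithmetic. The rates in the example are placeholders I am assuming for illustration, so check the current AWS price list for your region and architecture.

```python
def lambda_cost_usd(invocations, avg_duration_s, memory_mb,
                    price_per_gb_second, price_per_million_requests):
    """Estimate Lambda cost from usage and caller-supplied rates."""
    gb_seconds = invocations * avg_duration_s * (memory_mb / 1024)
    compute = gb_seconds * price_per_gb_second
    requests = invocations / 1_000_000 * price_per_million_requests
    return compute + requests


# Example: 1,000 solves a month at 10 s each with 3008 MB.
# Both rates below are assumed placeholder values, not quoted prices.
estimate = lambda_cost_usd(
    invocations=1_000,
    avg_duration_s=10,
    memory_mb=3008,
    price_per_gb_second=0.0000133334,
    price_per_million_requests=0.20,
)
print(f"Estimated monthly cost: ${estimate:.2f}")
```

Comparing that figure with the flat monthly price of an always-on instance gives a rough break-even point for your own request volume.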

No server management. No OS patches, no uptime monitoring. Deploy the container image and you’re done.

Abuse prevention. We enforced mandatory proxy usage in the configuration so the Lambda’s own IP address isn’t burned by direct requests.
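A proxy requirement like this can be enforced with a small validation step before any browser work starts. The sketch below assumes the request payload carries the proxy as `{"proxy": {"url": ...}}`; the function name, the accepted schemes, and the error message are hypothetical rather than taken from the actual configuration.

```python
from urllib.parse import urlparse

# Assumed set of acceptable proxy schemes for this illustration.
ALLOWED_SCHEMES = {"http", "https", "socks4", "socks5"}


def require_proxy(payload):
    """Return the proxy URL from a request payload, or raise if it is missing."""
    proxy = payload.get("proxy") or {}
    url = proxy.get("url", "") if isinstance(proxy, dict) else ""
    parsed = urlparse(url)
    if parsed.scheme not in ALLOWED_SCHEMES or not parsed.hostname:
        raise ValueError("a proxy with a valid URL is required")
    return url
```

Rejecting the request up front also avoids paying for a browser launch that would be thrown away.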

When to Use This Setup

This architecture makes sense when:

- Your Cloudflare bypass usage is sporadic rather than continuous

- You need horizontal scaling without managing infrastructure

- You want to avoid idle compute costs from a permanently running container

For high-frequency, low-latency use cases where cold starts matter, a persistent container may still be preferable.

Related Reading

For a deeper look at how Cloudflare bypass works at the technical level, see Bypassing Cloudflare with Browser Automation. For Datadome-protected APIs specifically, see How to Obtain Datadome Cookies for the Too Good To Go API.